2026 AI AGENT LEARNING MANUAL: WHAT TO LEARN, WHAT TO USE, WHAT NOT TO TOUCH

Rather than chasing every change out of blind anxiety, learn to judge which changes are worth following

Original title: What to Learn, Build, and Skip in AI Agents (2026)

Compiled by: Peggy, BlockBeats

Editor's note: The AI Agent field is entering a period of exploding tools and missing consensus.

Every week brings new frameworks, new models, new benchmarks, and new "10x more efficient" products, but the truly important question is not "how do I keep up with all these changes?" but "what actually deserves my investment?"

In the author's view, what gives you real long-term resilience while the tech stack is being rewritten is not the latest framework but the underlying capabilities: context engineering, tool design, eval systems, the orchestrator-subagent pattern, sandboxing, and harness thinking. These capabilities will not become obsolete as quickly as models do; they form the foundation for building reliable AI Agents.

The article further argues that AI Agents are also changing what "qualifications" mean. In the past, degrees, grades, and years of experience were the entry pass. But in a field where even the giants are openly trying and failing, a CV is no longer the only credential. What you have built and what you have shipped matter more and more.

So this article is not only about what to learn, what to use, and what to skip in AI Agents in 2026. It is also a reminder that as the noise grows, the scarcest ability is judging what is worth learning and continuing to build things that are genuinely useful.

The following is the original text:

Every day brings a new framework, a new benchmark, a new "10x more efficient" product. The question is no longer "How do I keep up?" but: what is the real signal here, and what is just noise dressed up as urgency?

Any roadmap may be obsolete a month after it is published. The framework you just learned last quarter is already dated. The benchmark you optimized for has been gamed and quickly replaced. We were trained to follow a traditional path: a tech stack mapped to a set of courses and levels; a string of jobs mapped to years and titles; a slow climb up the ladder. But AI has rewritten this canvas. Today, with the right prompts and good taste, one person can deliver work that used to require an engineer with two years of experience.

Professional expertise still matters. Nothing replaces having watched a system go down, having chased a memory leak at two in the morning, or having stepped back from the hype, chosen a boring but correct solution, and been proven right. That kind of judgment will keep gaining value. What no longer compounds the way it used to is your familiarity with the API surface of this week's hot framework; six months from now it may have changed again. The real winners two years from now will be the people who chose durable fundamentals and let the rest of the noise pass.

I have been building products in this space for the past two years, earning more than $250,000 a year, and I now lead technology at a stealth company. If anyone asks me, "What should I care about right now?", this is what I'll send them.

This is not a roadmap. The Agent field does not have a clear destination yet. Even the big labs are working it out in public: shipping regressions straight to millions of users, then writing postmortems and pushing patches. If the team behind Claude Code can ship a version with a 47% performance regression that goes unnoticed until the user community finds it, then the idea that "there is a stable map underneath" is a fiction. Everyone is still searching. The opportunity for startups exists precisely because the giants don't know the answer either. People who can't write code are working with agents and shipping, by Friday, things that PhDs thought were impossible.

The most interesting thing about this moment is how it changes what counts as a qualification. The traditional path optimizes for credentials: a degree, a junior role, a mid-level role, a senior role, titles accumulated slowly over time. That makes sense when the ground underneath isn't shifting. But now the ground is moving under everyone's feet at the same pace. The gap between a 22-year-old who publicly ships an agent demo and a 35-year-old senior engineer is no longer a decade of accumulated skill; both face the same blank canvas. What truly compounds for both of them is the willingness to keep shipping and the fundamentals that won't be obsolete within a quarter.

This is the core of the article. Next, I'll offer a way to judge which fundamentals deserve your attention and which releases you can simply skip. Take what fits you and leave the rest.

The filter that actually works

You can't keep up with every week's announcements, and you shouldn't try. What you need is not a feed but a filter.

Five tests have held up over the past 18 months. Before you add anything new to your stack, run it through these five questions.

Will it matter in two years?

If it's just a wrapper around a frontier model, a CLI flag, or "yet another Devin", the answer is almost always no. If it's a primitive, such as a protocol, a memory pattern, or a sandboxing approach, the answer is more likely yes. Wrappers have a short half-life; the half-life of a primitive is measured in years.

Has someone you respect built a real product on it and written honestly about the experience?

Marketing posts don't count. A blog post titled "We tried X in production, and here's where it broke" is worth more than ten launch announcements. The genuinely useful signals in this field always come from people who lost a weekend to the thing.

Does it require you to throw away your existing tracing, retries, config, and auth?

If so, it's a framework trying to become a platform, and frameworks trying to become platforms have a mortality rate of roughly 90%. A good primitive slots into your existing system instead of forcing you to migrate.

What does it cost you to skip it for six months?

For most releases, nothing. In six months you'll know more, and the winning version will be clearer. This test lets you skip 90% of releases without anxiety. Yet it's the test most people refuse to use, because skipping something makes you feel like you're falling behind. You aren't.

Can you tell whether it actually made your agent better?

If not, you're just guessing. A team without evals runs on vibes and eventually ships regressions. A team with evals can tell itself: on this specific workload, this week, is GPT-5.5 or Opus 4.7 better?

If you take only one habit from this article, make it this: every time something new ships, write down what you would need to see in six months to believe it really matters. Then come back in six months and check. Most of the time the question answers itself, and your attention goes to the things that genuinely compound.
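The five tests and the six-month question can be turned into a small, explicit checklist. The Python sketch below is one hypothetical way to do it: the `Release` fields, the all-tests-must-pass rule in `should_adopt`, and the 182-day review date are illustrative assumptions, not a scoring scheme the author prescribes.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Release:
    name: str
    matters_in_two_years: bool       # Test 1: a primitive rather than a wrapper?
    honest_production_writeup: bool  # Test 2: someone you respect shipped it and wrote it up
    requires_replacing_stack: bool   # Test 3: must you drop tracing/retries/config/auth?
    cost_of_skipping_6mo: str        # Test 4: "none", "low", or "high"
    measurable_by_evals: bool        # Test 5: can your evals show it made the agent better?


def should_adopt(r: Release) -> bool:
    """Adopt only if every test passes; the default answer is 'skip'."""
    return (
        r.matters_in_two_years
        and r.honest_production_writeup
        and not r.requires_replacing_stack
        and r.cost_of_skipping_6mo == "high"
        and r.measurable_by_evals
    )


@dataclass
class WatchItem:
    release: str
    evidence_needed: str  # what you would need to see to believe it matters
    check_on: date


def add_to_watchlist(name: str, evidence: str, today: date) -> WatchItem:
    """Write the six-month question down now and schedule the revisit (~182 days)."""
    return WatchItem(name, evidence, today + timedelta(days=182))
```

Defaulting to "skip" unless every test passes mirrors the article's point that skipping most releases costs nothing.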

The real skill behind these tests is harder to name than any single one of them: the ability to stay unimpressed. The framework trending on Hacker News this week will have cheerleaders within 14 days, and they will all sound smart. Six months later, half of those frameworks are no longer maintained and the cheerleaders have moved on to the next hotspot. The people who stayed out saved their attention for whatever survived the quiet period after the hype faded. Restraint, watching, and saying "I'll know in six months" is the real professional skill in this field. Everyone reads the announcements; almost no one is good at not reacting to them.

What to learn

Concepts, patterns, the shapes of things. These are what actually pay off. They carry across models, frameworks, and paradigm shifts. Learn them well and you can pick up any new tool in a weekend. Skip them and you'll forever be learning surface mechanics.

Context Engineering

Over the past two years, the most important rename was "prompt engineering" becoming "context engineering". The change is real, not just new branding.

The model is no longer something you write one clever instruction for. It has become something whose working context you assemble at every step. That context includes the system prompt, tool schemas, retrieved documents, previous tool outputs, scratchpad state, and compressed history. The agent's behavior is the product of everything you put into the context window.

You need to internalize this: context is state. Every irrelevant token degrades reasoning quality. Context rot is a real production failure mode: by step eight of a ten-step task, the original goal may be buried under tool output. Teams that ship reliable agents actively summarize, compress, and prune context. They trim tool descriptions, cache the static parts, and keep the changing parts out of the cache. They treat the context window the way an experienced engineer treats memory.

One concrete exercise: take any agent running in production and open its full trace. Look at the context at step one, then at step seven. Count how many tokens are still doing useful work. The first time you do this, you'll probably be embarrassed. Then you'll fix it, and the same agent will become noticeably more reliable without changing the model or the prompt.
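The step-one versus step-seven exercise suggests a simple compaction policy. Below is a minimal Python sketch, assuming a rough ~4-characters-per-token heuristic (use your provider's tokenizer in practice) and a placeholder string where a real summarization call would go. Pinned messages (system prompt, original goal) and the most recent turns are kept verbatim; older, unpinned tool output is replaced first. Keeping the pinned, static messages at the front also keeps the prompt prefix stable, which prefix-based prompt caching generally depends on.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str             # "system", "user", "assistant", or "tool"
    content: str
    pinned: bool = False  # e.g. the system prompt and the original goal


def approx_tokens(text: str) -> int:
    # Rough heuristic (~4 characters per token), not a real tokenizer.
    return max(1, len(text) // 4)


def total_tokens(messages: list[Message]) -> int:
    return sum(approx_tokens(m.content) for m in messages)


def compact(messages: list[Message], budget: int, keep_recent: int = 4) -> list[Message]:
    """Keep pinned and recent messages verbatim; replace older tool output until under budget."""
    result = list(messages)
    cutoff = max(0, len(result) - keep_recent)  # only messages before this index are candidates
    for i in range(cutoff):
        if total_tokens(result) <= budget:
            break
        m = result[i]
        if m.role == "tool" and not m.pinned:
            # Placeholder for a real summarization step (e.g. a call to a cheap model).
            result[i] = Message("tool", f"[summarized: {approx_tokens(m.content)} tokens of tool output elided]")
    return result
```

The policy does not guarantee the budget is met (pinned and recent messages are never touched); it only removes the cheapest-to-lose tokens first.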

If you read only one piece on this, read Anthropic's "Effective context engineering for AI agents". Then read their write-up on their multi-agent research system, which gives numbers on how much context isolation matters as a system scales.

Tool design

Tools are where your agent touches your business. The model picks a tool based on its name and description, and decides how to retry based on the error message. Whether a tool's contract matches the way an LLM reasons determines whether the model succeeds or fails.

Five to ten well-named tools beat twenty-plus mediocre ones. Tool names should read like natural English verbs. Descriptions should spell out when to use the tool and when not to. Error messages should be feedback the model can act on. If a tool's output exceeds 500 tokens, summarize it before returning it. One team reported in a public write-up that simply rewriting their error messages cut retry loops by 40%.
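Here is what those rules might look like for a single tool, as a plain-Python sketch. The `look_up_order_status` tool, its order data, and the truncation stand-in for summarization are all hypothetical; the 500-token threshold comes from the text, and token counts use a rough ~4-characters-per-token heuristic.

```python
from dataclasses import dataclass
from typing import Callable

MAX_OUTPUT_TOKENS = 500  # threshold from the text; counting below is approximate


@dataclass
class Tool:
    name: str         # reads like an English verb phrase
    description: str  # says when to use it AND when not to
    fn: Callable[..., str]


def approx_tokens(text: str) -> int:
    return max(1, len(text) // 4)  # rough heuristic, not a real tokenizer


def cap_output(text: str) -> str:
    """Shrink oversized output before the model sees it (stand-in for real summarization)."""
    if approx_tokens(text) <= MAX_OUTPUT_TOKENS:
        return text
    head = text[: MAX_OUTPUT_TOKENS * 4 // 2]  # keep roughly half the budget verbatim
    return head + f"\n[truncated: output was ~{approx_tokens(text)} tokens; narrow the query to see more]"


ORDERS = {"A100": "shipped", "A101": "pending"}  # hypothetical data


def look_up_order_status(order_id: str) -> str:
    if not order_id.startswith("A"):
        # Actionable error: says what went wrong and what to do next.
        return (f"Error: '{order_id}' is not a valid order ID. Order IDs start with 'A' "
                "(e.g. 'A100'). Ask the user for the ID printed on their receipt.")
    status = ORDERS.get(order_id)
    if status is None:
        return f"Error: no order '{order_id}'. Do not retry with the same ID; confirm it with the user."
    return cap_output(f"Order {order_id}: {status}")


look_up_order = Tool(
    name="look_up_order_status",
    description=("Use to check the shipping status of ONE existing order by its ID. "
                 "Do not use for refunds or to search orders by customer name."),
    fn=look_up_order_status,
)
```

Each error string tells the model what to do instead of retrying blindly, which is the lever the 40% figure above refers to.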

Anthropic's "Writing effective tools for agents" is a good starting point. After reading it, instrument your own tools and watch the real call patterns. The biggest reliability gains almost always come from the tool side. Many people keep tweaking the prompt and ignore where the real leverage is.

Orchestrator-subagent pattern

The 2024-2025 debate over multi-agent systems has converged on a pattern that everyone is now adopting. Naive multi-agent systems, meaning several agents writing to shared state in parallel, fail catastrophically because errors compound. A single agent loop can be pushed much further than you think. Only one kind of multi-agent setup really works in production: an orchestrator agent that assigns narrow, read-only tasks to isolated subagents and then synthesizes their results.

Anthropic's research system works this way. Claude Code's subagents work this way. Spring AI and most production frameworks are now standardizing on this pattern. Subagents have small, focused contexts and cannot modify shared state. Writes are the orchestrator's responsibility.

Cognition's "Don't Build Multi-Agents" and Anthropic's "How we built our multi-agent research system" look like opposing views, but they say the same thing in different terms. Both are worth reading.

Default to a single agent. Consider orchestrator-subagent only when a single agent hits a real limit: context window pressure, latency from sequential tool calls, or heterogeneous tasks that genuinely benefit from focused contexts. It's something you don't need until you feel the pain.
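The orchestrator-subagent contract (narrow read-only tasks go out, results come back, only the orchestrator writes) can be sketched in Python with a read-only view of shared state. The `subagent` function is a hypothetical stand-in for a model call that just does a keyword search, and `MappingProxyType` is used here only to make the read-only boundary explicit.

```python
from concurrent.futures import ThreadPoolExecutor
from types import MappingProxyType
from typing import Mapping


def subagent(task: str, docs: Mapping[str, str]) -> str:
    """Hypothetical subagent: one narrow task, its own small context, read-only access.
    A real subagent would call a model; this stand-in just searches the docs."""
    hits = [name for name, text in docs.items() if task.lower() in text.lower()]
    return f"{task}: {', '.join(sorted(hits)) or 'no matches'}"


def orchestrator(tasks: list[str], shared_state: dict[str, str]) -> dict[str, str]:
    read_only = MappingProxyType(shared_state)  # subagents cannot mutate this view
    with ThreadPoolExecutor(max_workers=4) as pool:
        # Subagents run in parallel; map() returns results in task order.
        findings = list(pool.map(lambda t: subagent(t, read_only), tasks))
    # Only the orchestrator writes, after synthesizing the subagents' results.
    shared_state["summary"] = " | ".join(findings)
    return shared_state
```

Because subagents only ever receive a read-only view, an error in one of them can produce a bad finding but cannot corrupt shared state for the others.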

Evals and golden datasets

Every team that ships reliable agents has evals. Teams without evals usually don't ship reliably. It's the highest-leverage habit in the field and the most underrated one I've seen at every company.

What works: collect production traces, label the failure cases, and turn them into regression tests. Every time a new failure shows up in production, add it. Use LLM-as-judge for the subjective parts and exact matching or programmatic checks for the rest. Run the suite before any prompt, model, or tool change. Spotify's engineering blog reports that its judge layer stops about 25% of agent outputs before they ship. Without that layer, one output in four would reach users when it shouldn't.
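A minimal regression-suite sketch in Python, assuming each labeled production failure becomes a `Case` checked by exact match, by a programmatic check, or by an LLM judge. The `judge` function is a hypothetical placeholder (it only checks for a non-empty answer) where a real LLM-as-judge call would go, and the all-must-pass threshold in `gate` is an assumption you would tune.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Case:
    input: str
    expected: Optional[str] = None                   # exact-match check
    check: Optional[Callable[[str], bool]] = None    # programmatic check
    rubric: Optional[str] = None                     # subjective: LLM-as-judge


def judge(output: str, rubric: str) -> bool:
    """Placeholder for an LLM-as-judge call; this stub only checks the output is non-empty."""
    return bool(output.strip())


def run_suite(agent: Callable[[str], str], cases: list[Case]) -> float:
    """Return the fraction of cases the agent passes."""
    passed = 0
    for c in cases:
        out = agent(c.input)
        if c.expected is not None:
            ok = out.strip() == c.expected
        elif c.check is not None:
            ok = c.check(out)
        else:
            ok = judge(out, c.rubric or "")
        passed += int(ok)
    return passed / len(cases)


def gate(agent: Callable[[str], str], cases: list[Case], threshold: float = 1.0) -> bool:
    """Block a prompt/model/tool change unless the regression suite clears the threshold."""
    return run_suite(agent, cases) >= threshold
```

Every new production failure gets appended to the case list, so the suite grows with the agent's real failure history.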

The mental model that really sticks: evals are the unit tests that keep your agent on task while everything else changes. Models ship new versions, frameworks ship breaking changes, and providers deprecate endpoints. Your evals are the only thing that can tell you whether your agent still works. Without them, you're building a system whose correctness depends on the goodwill of a moving target.

Eval frameworks such as Braintrust, Langfuse evals, and LangSmith are all fine. But they are not the bottleneck. The real bottleneck is having a labeled dataset in the first place. Start on day one, before you scale anything. The first 50 examples can be labeled by hand in an afternoon. No excuses.

The file system as state, and the Think-Act-Observe loop

For any agent doing genuine multi-step work, the durable structure is: think, act, observe, repeat. The file system or structured storage is the source of truth. Every step is logged and replayable. Claude Code, Cursor, Devin, Aider, OpenHands, and Goose have all converged on this.

The model itself is stateless. The harness must be stateful. The file system is the stateful primitive every developer already understands. Once you accept this framing, the rest of the discipline follows naturally: checkpoints, resumability, subagent verification, sandboxed execution.
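A minimal sketch of the Think-Act-Observe loop with the file system as the source of truth, in Python. The model is abstracted as a hypothetical `policy` callable that maps state to the next action; the harness validates the action, runs the tool, records the observation, and writes a JSON checkpoint after every step, so a crashed run can resume and every step can be replayed. Tool names and the action format are illustrative assumptions.

```python
import json
from pathlib import Path
from typing import Callable

# A "model" here is any callable from state to the next action; the harness owns the state.
Policy = Callable[[dict], dict]


def run_agent(policy: Policy, tools: dict[str, Callable[[str], str]],
              goal: str, state_file: Path, max_steps: int = 10) -> dict:
    # Resume from the checkpoint if one exists: the file is the source of truth.
    if state_file.exists():
        state = json.loads(state_file.read_text())
    else:
        state = {"goal": goal, "steps": [], "done": False}
    while not state["done"] and len(state["steps"]) < max_steps:
        action = policy(state)                    # think: the model picks the next move
        if action["tool"] == "finish":
            state["done"] = True
            state["answer"] = action["arg"]
        else:
            if action["tool"] not in tools:       # the harness validates before acting
                observation = f"Error: unknown tool {action['tool']!r}"
            else:
                observation = tools[action["tool"]](action["arg"])  # act
            state["steps"].append({"action": action, "observation": observation})  # observe
        state_file.write_text(json.dumps(state))  # checkpoint every step (replayable log)
    return state
```

Because the model only ever sees `state` and returns an action, swapping in a different model means swapping `policy`; everything that makes the run durable lives in the harness.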

The deeper point is that in any production agent worth paying for, the harness does more work than the model. The model picks the next action; the harness validates it, runs it in the sandbox, captures the output, decides what to feed back, decides when to stop, when to checkpoint, and when to spawn a subagent. Swap in another model of similar quality and a good harness still ships product. Pair even the world's best model with a poor harness and you get an agent that randomly forgets what it was doing.

If you're building anything more complex than a one-shot tool call, the harness is where you should really spend your time. The model is just one component.

Understand MCP conceptually

Don't just learn how to call an MCP server. Learn its model. It draws a clean line between an agent's capabilities, tools, and resources, and provides a scalable auth and transport scheme underneath. Once you understand this, every other "agent integration framework" you see looks like a lower-fidelity version of MCP, and you save the time of evaluating each one.
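To make that separation concrete, here is a toy Python model of the idea: a server that advertises tools (actions the model may invoke) separately from resources (data addressed by URI that a client can read), plus a discovery call. This is deliberately NOT the MCP SDK or its wire protocol; `CapabilityServer` and its methods are hypothetical names, and the official SDKs are the place to look for the real API.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class CapabilityServer:
    """Toy model of the MCP idea, not the real SDK: tools and resources are registered
    and advertised separately, and clients discover them instead of hard-coding them."""
    name: str
    tools: dict[str, Callable[..., str]] = field(default_factory=dict)
    resources: dict[str, Callable[[], str]] = field(default_factory=dict)

    def tool(self, fn: Callable[..., str]) -> Callable[..., str]:
        # Actions the model may invoke, registered under the function's name.
        self.tools[fn.__name__] = fn
        return fn

    def resource(self, uri: str) -> Callable:
        # Read-only data, addressed by URI.
        def register(fn: Callable[[], str]) -> Callable[[], str]:
            self.resources[uri] = fn
            return fn
        return register

    def list_capabilities(self) -> dict[str, list[str]]:
        # Discovery: a client asks what exists.
        return {"tools": sorted(self.tools), "resources": sorted(self.resources)}


server = CapabilityServer("tickets")  # hypothetical example server


@server.tool
def create_ticket(title: str) -> str:
    return f"created: {title}"


@server.resource("tickets://open")
def open_tickets() -> str:
    return "T-1, T-2"
```

Any integration expressed this way can be consumed by any client that speaks the same discovery contract, which is the property that makes one-off "agent integration frameworks" redundant.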

MCP is now hosted by the Linux Foundation. All major model providers support it. It is now much closer to a real standard than to hype.

Sandboxing is a primitive

Every production-grade agent runs in a sandbox. Every browser agent has run into indirect prompt injection. Every multi-tenant agent has had a permissions incident at some point. Treat sandboxing as an infrastructure primitive, not a feature you add when a customer asks for it.

The fundamentals to learn: process isolation, network egress control, scoped secret management, and the auth boundary between the agent and its tools. Teams that wait for a customer's security review to bolt this on often lose the deal. Teams that build it in from week one sail through enterprise procurement.
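As a starting point for the process-isolation and scoped-secrets parts, here is a minimal Python sketch that runs untrusted code in a separate interpreter with an empty environment, a throwaway working directory, and a hard timeout. It assumes a POSIX-like host, and it is not a real sandbox: it does not block network egress, filesystem reads outside the working directory, or dangerous syscalls, all of which a container, microVM, or hosted service such as E2B would need to add.

```python
import subprocess
import sys
import tempfile


def run_untrusted(code: str, timeout_s: float = 5.0) -> tuple[int, str]:
    """Process isolation only: separate interpreter, no inherited env vars (so no API keys
    leak into the child), throwaway cwd, hard timeout. No network or syscall filtering."""
    with tempfile.TemporaryDirectory() as workdir:
        try:
            proc = subprocess.run(
                [sys.executable, "-I", "-c", code],  # -I: isolated mode (ignores PYTHON* vars, user site)
                cwd=workdir,
                env={},              # scoped secrets: pass nothing unless explicitly needed
                capture_output=True,
                text=True,
                timeout=timeout_s,   # the child is killed if it runs too long
            )
            return proc.returncode, proc.stdout + proc.stderr
        except subprocess.TimeoutExpired:
            return -1, f"killed after {timeout_s}s timeout"
```

Passing an empty environment by default and adding secrets one at a time is the "scoped secrets" habit in miniature.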

What to build with

The following are concrete choices as of April 2026. These choices change, but not that fast. At this layer, pick things that are boring but stable.

Framework Layer

LangGraph is the default choice for production. About a third of large companies running agents use it. Its abstractions match the real shape of agent systems: typed state, conditional edges, durable workflows, and human-in-the-loop checkpoints. The downside is verbosity; the upside is that once an agent actually reaches production you really do need control over these things, and that verbosity maps directly onto those controls.
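To make those abstractions concrete without tying the example to LangGraph's actual API, here is a plain-Python sketch of typed state, a conditional edge, a checkpoint after every node, and a human-approval step. All names (`DraftState`, `route_after_write`, the `approve` callback, the five-revision cap) are hypothetical.

```python
from typing import Callable, TypedDict


class DraftState(TypedDict):
    draft: str
    approved: bool
    revisions: int


def write_node(state: DraftState) -> DraftState:
    # A node returns an updated copy of the typed state.
    return {**state, "draft": state["draft"] + " +edit", "revisions": state["revisions"] + 1}


def route_after_write(state: DraftState) -> str:
    # Conditional edge: the next node depends on the current state.
    return "human_approval" if state["revisions"] >= 2 else "write"


def run_graph(state: DraftState, approve: Callable[[DraftState], bool],
              checkpoints: list, max_revisions: int = 5) -> DraftState:
    node = "write"
    while node != "end":
        if node == "write":
            state = write_node(state)
            node = route_after_write(state)
        else:  # "human_approval": execution waits on an external decision
            state = {**state, "approved": approve(state)}
            node = "end" if state["approved"] or state["revisions"] >= max_revisions else "write"
        checkpoints.append((node, dict(state)))  # durable record: resume from any entry
    return state
```

The point of the sketch is the shape, not the code: state, edges, checkpoints, and a human gate are exactly the controls you end up needing in production.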

If you mainly work in TypeScript, Mastra is the pragmatic choice. It has the clearest mental model in that ecosystem.

If your team likes Pydantic and wants type safety as a first-class citizen, Pydantic AI is a reasonable greenfield choice. It shipped v1.0 in late 2025, and its momentum is real.

For provider-native work such as computer use, voice, and real-time interaction, the Claude Agent SDK or the OpenAI Agents SDK can run inside a LangGraph node. Don't try to make them the top layer of a heterogeneous system. They're excellent at what they do.

Protocol Layer

MCP, and nothing else.

Wrap your own tools as MCP servers, and consume external integrations the same way. MCP has now crossed the threshold: in most cases you can find an off-the-shelf server before you would need to build one. In 2026, hand-writing a custom tool integration is largely wasted effort.

Memory Layer

When choosing a memory system, choose by how long your agent needs to stay coherent on its own, not by hype.

Mem0 suits chat personalization: user preferences and light history. Zep suits production-grade conversational systems, especially where state evolves and entities need to be tracked. Letta suits agents that must stay consistent across work cycles lasting days or even weeks. Most teams don't need that; the ones that do really need it.

The common mistake is adopting a memory framework before you have a memory problem. Start with what fits in the context window, plus a vector database. Add a memory system only when you can clearly state the failure mode it is meant to solve.
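"Context window plus a vector database" can start very small. Here is a minimal Python sketch, assuming a bag-of-words vector as a stand-in for a real embedding model and an in-memory list as a stand-in for the vector database: recent turns stay in the window verbatim, and older facts are pulled back in by cosine-similarity search. `VectorStore` and `build_context` are hypothetical names.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    # Stand-in for a real embedding model: a bag-of-words vector.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[k] * b[k] for k in a)
    na = math.sqrt(sum(v * v for v in a.values()))
    nb = math.sqrt(sum(v * v for v in b.values()))
    return dot / (na * nb) if na and nb else 0.0


class VectorStore:
    """Minimal in-memory stand-in for a vector database."""

    def __init__(self) -> None:
        self.items: list[tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        self.items.append((text, embed(text)))

    def search(self, query: str, k: int = 2) -> list[str]:
        q = embed(query)
        ranked = sorted(self.items, key=lambda it: cosine(q, it[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


def build_context(recent_turns: list[str], store: VectorStore, query: str, k: int = 2) -> list[str]:
    # Retrieved older facts first, then the recent turns verbatim.
    return store.search(query, k) + recent_turns
```

Only when this setup fails in a way you can name (say, facts that change over time and go stale) does a dedicated memory framework earn its place.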

Observability and Evals

Langfuse is the default open-source choice. It can be self-hosted, is MIT-licensed, and covers tracing, prompt version management, and basic LLM-as-judge evals. If you already use LangChain, LangSmith integrates more tightly. Braintrust suits research-style eval workflows, especially those that need rigorous comparisons. OpenLLMetry / Traceloop suits polyglot codebases that need vendor-neutral OpenTelemetry integration.

You need both tracing and evals. Tracing answers "What did the agent do?" Evals answer "Is it better or worse than yesterday?" Without them, don't ship. Setting them up on day one costs far less than retrofitting them after running blind.
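A minimal tracing sketch in Python: a decorator that records one span per tool call (name, inputs, output or error, duration) into an in-memory list. The `TRACE` list and the two example tools are hypothetical; in practice the spans would be exported to Langfuse, LangSmith, or an OpenTelemetry backend rather than kept in a global.

```python
import functools
import time
from typing import Any, Callable

TRACE: list[dict[str, Any]] = []  # hypothetical sink; a real setup exports spans to a backend


def traced(fn: Callable) -> Callable:
    """Record one span per call: what ran, with what inputs, what came back, how long it took."""
    @functools.wraps(fn)
    def wrapper(*args, **kwargs):
        span: dict[str, Any] = {"name": fn.__name__, "args": args, "kwargs": kwargs}
        start = time.perf_counter()
        try:
            result = fn(*args, **kwargs)
            span["output"] = result
            return result
        except Exception as exc:
            span["error"] = repr(exc)  # failures are recorded, then re-raised
            raise
        finally:
            span["duration_s"] = time.perf_counter() - start
            TRACE.append(span)
    return wrapper


@traced
def search_docs(query: str) -> str:
    return f"3 results for {query!r}"


@traced
def divide(a: float, b: float) -> float:
    return a / b
```

Traces like these are also the raw material for the golden dataset: failed spans are the cases you label and add to your evals.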

Runtime and Sandbox

E2B suits general-purpose sandboxed code execution. Browserbase with Stagehand suits browser automation. Anthropic Computer Use suits scenarios that need real OS-level desktop control. Modal suits short-lived, bursty jobs.

Never run agent-generated code without a sandbox. If an agent compromised by prompt injection runs directly in your production environment, the blast radius becomes a story you never want to tell.

Model

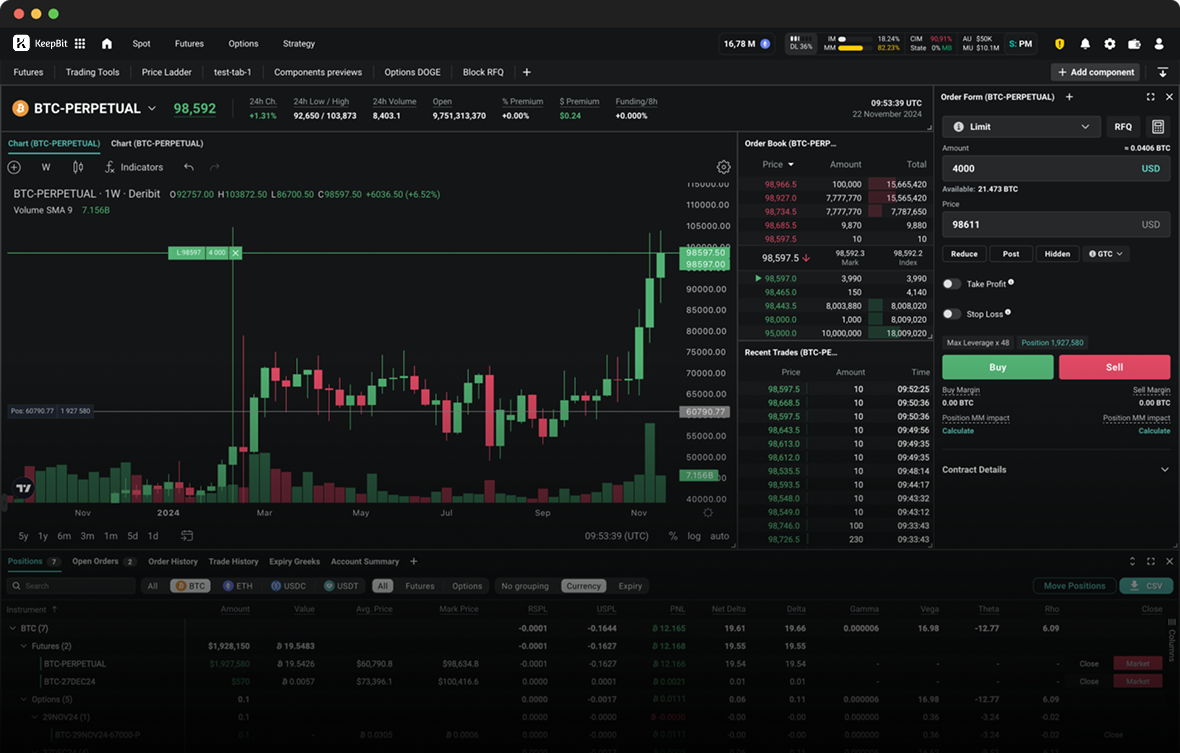

Chasing benchmarks is exhausting and mostly unhelpful. In practice, as of April 2026:

Claude Opus 4.7 and Sonnet 4.6 are strong at tool calling, multi-step consistency, and graceful failure recovery, which covers most workloads. For most workloads, Sonnet is the sweet spot between cost and performance.

GPT-5.4 and GPT-5.5 suit work that needs the strongest CLI/terminal reasoning, or teams that already live on OpenAI infrastructure.

Gemini 2.5 and 3 suit long-context or multimodal-heavy tasks.

When cost matters more than top-tier performance, especially for clear, narrowly scoped tasks, consider DeepSeek-V3.2 or Qwen 3.6.

Treat models as replaceable components. If your agent only works on one model, that's not a moat; it's a code smell. Use evals to decide which model to deploy. Re-evaluate every quarter, not every week.
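One way to let your own evals, rather than public benchmarks, decide the deployed model, sketched in Python: pick the cheapest candidate whose pass rate on your eval suite clears a bar. The selection rule, the `Candidate` fields, and the model names and numbers used with it are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    model: str
    pass_rate: float         # measured by YOUR eval suite on YOUR workload
    cost_per_run_usd: float  # measured from your own traces


def pick_model(candidates: list[Candidate], min_pass_rate: float) -> Candidate:
    """Cheapest model that clears the eval bar (ties broken by higher pass rate).
    Intended to run once a quarter, not on every release."""
    eligible = [c for c in candidates if c.pass_rate >= min_pass_rate]
    if not eligible:
        raise ValueError("no candidate meets the eval bar; keep the current model")
    return min(eligible, key=lambda c: (c.cost_per_run_usd, -c.pass_rate))
```

For example, with hypothetical candidates `model-a` (0.92 pass rate, $0.40/run) and `model-b` (0.90, $0.12/run), a 0.88 bar selects `model-b`, while a 0.91 bar selects `model-a`.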

What to skip

You'll be constantly urged to learn and use these. You don't need to. Skipping them costs little and saves a lot of time.

AutoGen and AG2: not for production.

Microsoft's framework has moved to community maintenance, its release cadence has stalled, and its abstractions don't match what production teams actually need. It's fine for academic research, but don't build on it.

CrewAI: not for new production builds.

It's everywhere because it's great for demos. Engineers who have actually built production systems are migrating off it. Use it for prototypes, but don't commit to it long term.

Microsoft Semantic Kernel: skip it unless you're deeply locked into Microsoft's enterprise stack and your buyers care.

It is not where the ecosystem is heading.

DSPy: skip it unless you specialize in large-scale optimization of prompt programs.

It has philosophical value, but its audience is narrow. It is not a general-purpose framework.

Autonomous code-writing agents as your architecture of choice.

Code-as-action is an interesting research direction, but it is not the default pattern in production. It brings tooling and security problems that your competitors may never have to deal with.

"Autonomous agents."

AutoGPT and BabyAGI already ran that product pattern into the ground. The industry settled on the honest version, "agentic workflows": supervised, bounded, and evaluated. In 2026, anyone still selling "autonomous agents" for deployment is essentially selling 2023.

Agent app stores and marketplaces.

People have been betting on this since 2023, but it has never become a real business. Enterprises don't buy generic prebuilt agents. They either buy vertical agents tied to specific outcomes or build their own. Don't design your business around an app-store dream.

Horizontal "build any agent" enterprise platforms: choose carefully as a buyer.

For example, Google Agentspace, AWS Bedrock Agents, and Microsoft Copilot Studio. They may become useful, but they are still messy and slow to ship, and the buy-versus-build math usually favors building a narrow agent or buying a vertical one. The exceptions are Salesforce Agentforce and ServiceNow Now Assist, because they win inside a system of record you already use.

Don't chase the SWE-bench and OSWorld leaderboards.

Berkeley researchers documented in 2025 that almost every public benchmark could be topped without actually solving the underlying task. Teams now treat Terminal-Bench 2.0 and their own internal evals as more realistic signals. By default, stay skeptical of any single-number benchmark jump.

Naive parallel multi-agent architectures.

Five agents chatting over shared memory look great in a demo and fall apart in production. If you can't draw a clear orchestrator-subagent diagram on a napkin with the read and write boundaries marked, don't ship it.

Per-seat SaaS pricing for new agent products.

The market has shifted to outcome-based and usage-based pricing. Per-seat pricing not only earns you less, it also signals to buyers that you don't believe the product will deliver.

The next framework you see on Hacker News this week.

Wait six months. If it still matters, you'll know. If it doesn't, you've saved yourself a migration.

How to move forward

If you don't just want to keep up with agents but actually want to put them to use, the following sequence works. It's boring, but it works.

First, pick an outcome that already matters. Don't pick a moonshot, and don't open with a horizontal "agent platform" project. Choose something your business cares about and that is measurable: reducing support ticket volume, drafting first-pass legal reviews, triage, generating monthly reports. The agent's success is defined by improvement in that outcome. It's your eval target from day one.

This step matters more than any other because it anchors every decision that follows. With a concrete outcome, framework choice stops being a philosophical question: you choose whatever delivers this outcome fastest. Model choice stops being a benchmark argument: you choose the model your evals prove works for this particular job. "Do we need memory, subagents, custom history handling?" stops being a thought experiment; each is added only when a specific failure mode calls for it.

Teams that skip this step often end up building a horizontal platform nobody wants. Teams that take it seriously usually ship a narrow agent that pays for itself within a quarter. And that one agent running in production will teach them more than two years of reading.

Set up tracing and evals before you ship anything. Pick Langfuse or LangSmith and just commit. Build a small golden dataset by hand if necessary; 50 labeled examples are enough to start. You can't fix what you can't measure. Adding this later costs roughly ten times as much.

Start with a single agent loop. Choose LangGraph or Pydantic AI. For the model, choose Claude Sonnet 4.6 or GPT-5. Give the agent three to seven well-designed tools. Use the file system or a database as its state. Ship it to a small group of users first and watch the traces.

Treat the agent as a product, not a project. It will fail in ways you didn't expect, and those failures are your roadmap. Build a regression set from real production traffic. Every prompt change, model swap, and tool modification must pass it before deployment. Most teams underinvest here, and this is where most reliability comes from.

Add complexity only after you've earned the right to expand scope. Introduce subagents when context becomes the bottleneck. Add a memory framework when a single context window can't carry what's needed. Reach for computer use or browser use only when the underlying API genuinely doesn't exist. Don't design these in advance. Let failure modes pull them in.

Choose boring infrastructure. MCP for tools. E2B or Browserbase for sandboxes. Postgres, or whatever data store you already run, for state. Follow your existing systems for auth and observability wherever possible. Exotic infrastructure rarely wins; discipline does.

Watch unit economics from day one: cost per action, cache hit rate, cost of retry loops, and the distribution of model calls. Agents look cheap at the PoC stage, but if you don't start monitoring cost per outcome early, costs explode at 100x scale. A PoC that costs $0.50 per run can become $50,000 a month at moderate scale. Teams that don't see this coming end up in a CFO meeting they won't enjoy.
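The $0.50-per-run PoC turning into $50,000 a month corresponds to roughly 100,000 runs a month (about 3,300 a day). Below is a minimal cost-model sketch in Python that also folds in retry loops and cache hits; the parameter names, the 90% cache discount default, and the simple linear pricing are illustrative assumptions, not any provider's actual billing rules.

```python
def monthly_cost(runs_per_month: int, cost_per_run_usd: float,
                 retry_rate: float = 0.0, cache_hit_rate: float = 0.0,
                 cache_discount: float = 0.9) -> float:
    """Rough monthly spend under a linear pricing assumption.
    retry_rate: extra runs per run caused by retry loops (0.2 = 20% more runs).
    cache_hit_rate: share of each run's cost eligible for cached pricing.
    cache_discount: fraction saved on cached work (assumed; check your provider)."""
    effective_runs = runs_per_month * (1 + retry_rate)
    blended_price = cost_per_run_usd * (1 - cache_hit_rate * cache_discount)
    return effective_runs * blended_price
```

With the defaults, `monthly_cost(100_000, 0.50)` returns 50000.0; a 20% retry rate alone pushes that to about $60,000, while caching half of each run's cost at a 90% discount brings it down to about $27,500.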

Re-evaluate models quarterly, not weekly. Lock in a model for the quarter. At the end of the quarter, run the current frontier models through your eval suite. If the data says switch, switch. You get the benefits of model progress without the churn of chasing every release.

How to read the tide

Concrete signals that something may be real: a respected engineering team has published a postmortem with numbers, not just claims about how many people use it; it is a primitive, such as a protocol, a pattern, or infrastructure, rather than a wrapper; it works with the systems you already run instead of replacing them; its pitch talks about what it solves, not what it unlocks; it has been around long enough for someone to write a "where it doesn't work" blog post.

Concrete signals that something may just be noise: after 30 days there are still only demo videos and no production case studies; the benchmark gains look cleaner than they are real; the pitch uses "autonomous", "agent OS", or "build any agent" without qualification; the framework docs promise you can throw away your existing tracing, auth, and config; GitHub stars grow fast but actual usage doesn't grow with them; the buzz on Twitter moves fast but activity on GitHub can't keep up.

A useful weekly habit: spend 30 minutes on Friday surveying the field. Read three sources: the Anthropic Engineering Blog, Simon Willison's notes, and Latent Space. If a good postmortem came out this week, read one or two more. Skip the rest. You won't miss anything that truly matters.

What's next

These are worth watching over the next two quarters, not because they will win, but because whether they are signal or noise is still unresolved.

Replit Agent 4's parallel forking model.

This is one of the first serious attempts at multi-agent parallel work that doesn't get tripped up by shared state. If it holds up at scale, the default pattern may change.

Outcome-based pricing matures.

Sierra's and Harvey's revenue trajectories have validated it in narrow verticals. The question is whether it extends to other domains or stays confined to vertical scenarios.

Skills as a capability packaging layer.

The growth of AGENTS.md files and skills directories on GitHub suggests that a new way of packaging agent capabilities is emerging. Whether it will standardize the capability layer the way MCP standardized tools is still an open question.

Claude Code's April 2026 regression and reset.

An industry-leading agent shipped a version with a 47% performance regression, and users found it before internal monitoring did. This shows that even at the frontier, production practices are still very immature. If it pushes the whole industry to invest in better online evals, that's healthy.

Voice becomes the default customer interface.

Sierra's voice channel overtook its text channel in 2025. If that pattern holds in other verticals, design constraints such as latency, interruptions, and real-time tool calls become first-class problems, and many existing architectures will need rework.

Open-source models keep closing the gap in agent capabilities.

DeepSeek-V3.2 natively integrates thinking into tool use, and Qwen 3.6 and the broader open-source model ecosystem are worth watching too. The cost-performance calculus for narrow agent tasks is shifting. The default advantage of closed-source models won't last forever.

Each of these maps to one clear question: "What would I need to see in six months to believe this really matters?" That is the test. Track the answers, not the announcements.

An unconventional bet

Every framework you don't adopt is a migration you don't owe the future. Every benchmark you don't chase is a quarter of focus saved. The companies winning this cycle (Sierra, Harvey, and Cursor, each in its own field) chose narrow targets, built boring discipline, and let the noise in this field pass them by.

The traditional path is to pick a tech stack, spend years mastering it, and then climb the ladder. That works when technology stays stable for a decade. But now the stack changes every quarter. The real winners no longer optimize for "mastering a stack"; they optimize for taste, primitives, and shipping speed. They build small things in public and learn by shipping. They get invited into the room because they made something. The work itself is the credential.

Think about this carefully, because it's what the whole article is really trying to say. The career model most of us accepted assumes the world stays stable long enough for seniority to compound. You go to school, get a degree, climb the ladder. Two years here, three years there, and your résumé slowly becomes the thing that opens doors. The whole machine rests on the premise that the industry on the other side is stable enough.

But in this field there is no stable "other side". The company you want to join may be six months old. The framework it builds on may be only 18 months old. The underlying protocols may be only two years old. Half of the most-cited pieces in this field come from people who weren't even in it three years ago. There is no ladder to climb, because the building itself has been rebuilt. When the ladder stops working, what remains is an older way: make something, put it online, and let it introduce you. It's an unconventional path because it bypasses the credential system. But in a moving field, it is also the only path to growth that truly compounds.

That's what we see from the inside. Even the giants are shipping regressions in public, writing postmortems, and pushing patches. Some of the most interesting teams this year weren't in this field 18 months ago. People who couldn't write code are working with agents and shipping real software. PhDs may be overtaken by people who pick the right primitives and start moving fast. The door is already open. Most people are still filling out applications.

What you really need to develop is not "agent skills" but the discipline to judge which work compounds in a field whose surface keeps changing. Context engineering will compound. Tool design will compound. The orchestrator-subagent pattern will compound. Eval discipline will compound. Harness thinking will compound. The framework API released last Tuesday won't. Once you can tell them apart, the weekly wave of new releases stops feeling like pressure and becomes noise you can ignore.

You don't have to learn everything. Learn what compounds and skip what doesn't. Pick one outcome. Set up tracing and evals before you ship. Use LangGraph or your team's equivalent. Use MCP. Put the runtime in a sandbox. Start with a single agent by default. Expand scope only when failure modes pull in complexity. Re-evaluate models quarterly. Read three things on Friday.

That's the playbook. What remains is taste, shipping speed, and the patience not to chase what doesn't matter.

Go build something. Put it online. This era rewards people who make things, not people who only describe them. Right now is the best window yet for real makers.

[Original Link]